Publication

Impact of Cultural-Shift on Multimodal Sentiment Analysis

Tulika Banerjee, Niraj Yagnik, Anusha Hegde | Manipal Institute of Technology

Presented at The Sixth International Symposium on Intelligent Systems Technologies and Applications (ISTA'20)

Published: 17 November 2021, Journal of Intelligent & Fuzzy Systems

Abstract

Human communication is not limited to verbal speech. It is far more complex and involves many non-verbal cues, such as facial emotions and body language.

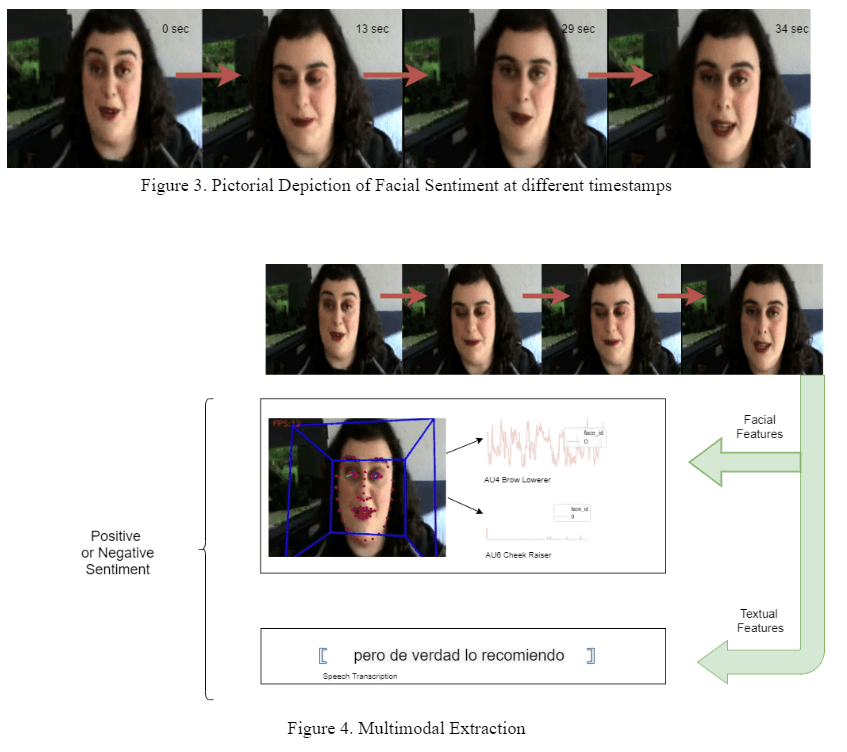

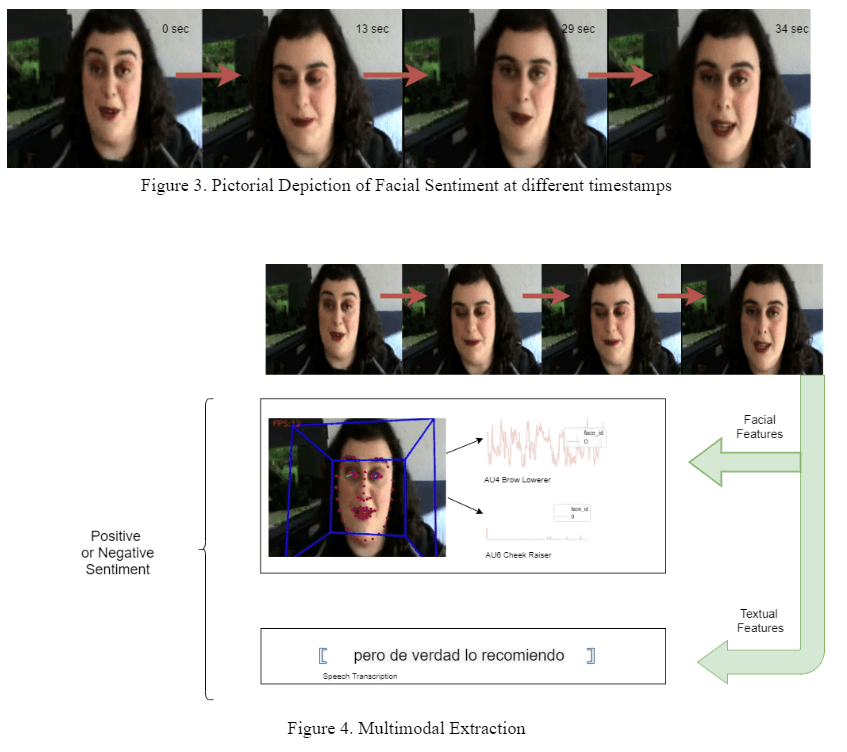

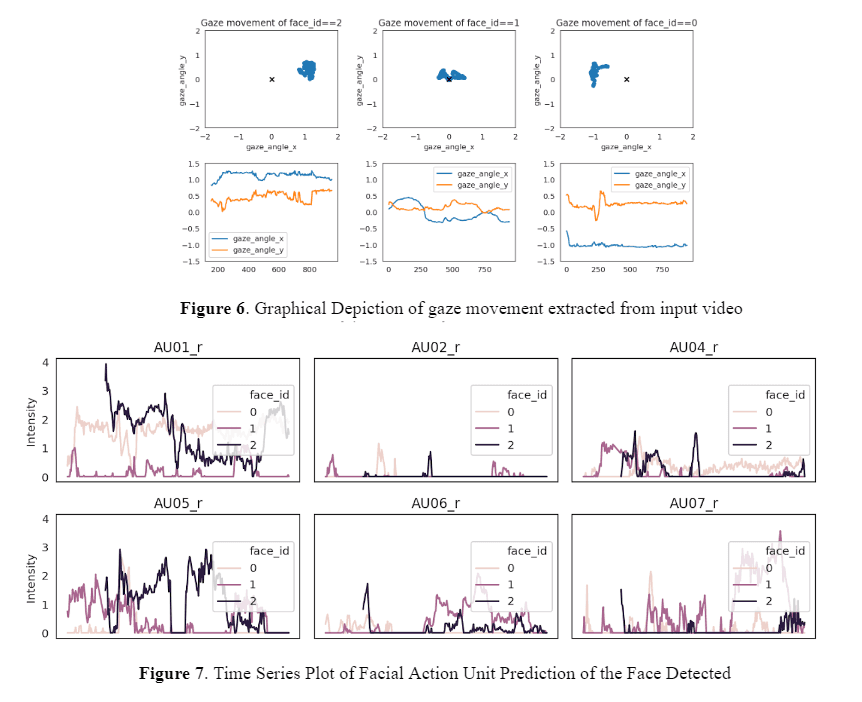

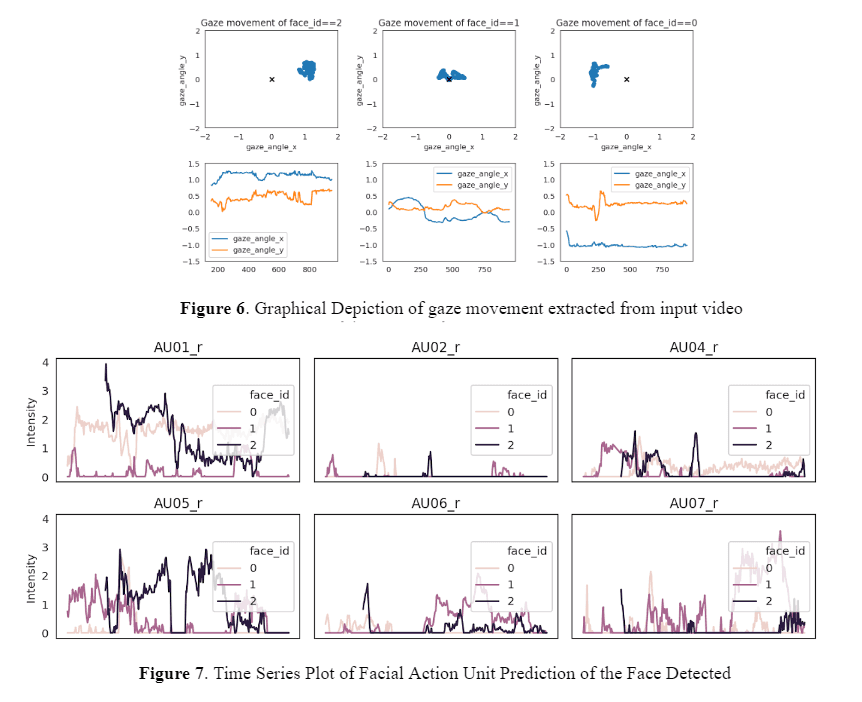

This paper quantifies the impact of non-verbal cues, primarily facial emotions, on the results of multimodal sentiment analysis.

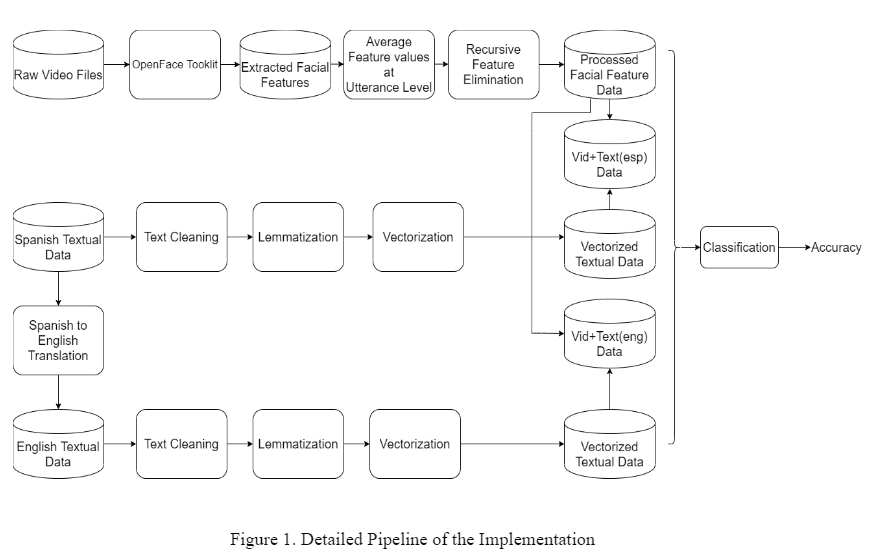

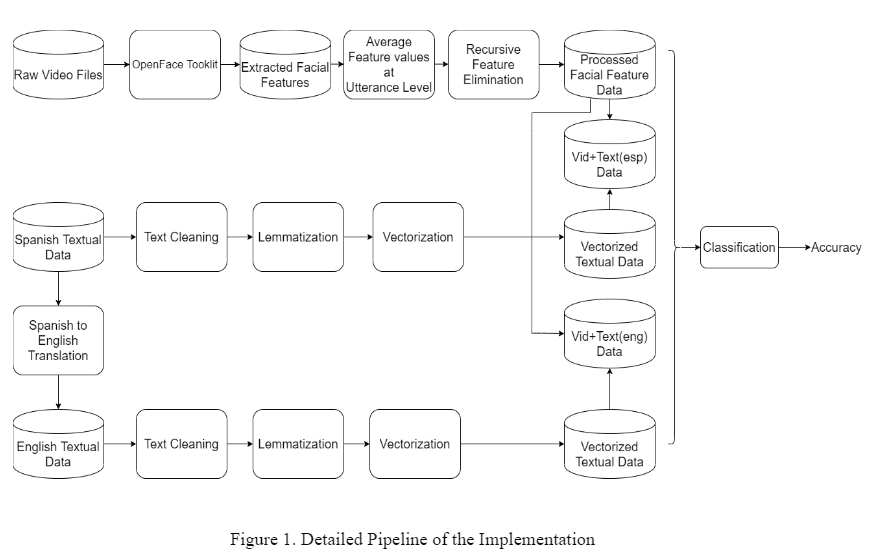

The paper works with a dataset of Spanish video reviews. The audio is available as Spanish text, which is translated to English, while visual features are extracted from the videos. Multiple classification models are built to analyze sentiment at each modal stage, i.e., on the Spanish and English textual datasets alone, and on the datasets obtained by combining the Spanish and English textual data with the corresponding visual cues.

The results show that combining Spanish textual features with visual features outperforms its English counterpart by the largest accuracy difference. This indicates an inherent correlation between Spanish visual cues and Spanish text that is lost when the text is translated to English.
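The abstract does not say which text features, visual features, or classifiers were used. Purely as a hypothetical sketch of what combining textual data with visual cues can look like, the Python below uses early (feature-level) fusion: a bag-of-words vector for each transcript is concatenated with a per-video visual vector, and a simple perceptron is trained on the result. The vocabulary, the two visual scores (assumed here to be smile and frown intensities), the toy reviews, and every function name are illustrative assumptions, not the authors' pipeline or data.

```python
from collections import Counter


def bag_of_words(text, vocab):
    """Count how often each vocabulary word occurs in a transcript (hypothetical text featurizer)."""
    counts = Counter(text.lower().split())
    return [float(counts[word]) for word in vocab]


def fuse(text_vec, visual_vec):
    """Early (feature-level) fusion: concatenate text and visual features into one vector."""
    return list(text_vec) + list(visual_vec)


def train_perceptron(X, y, epochs=20, lr=1.0):
    """Train a binary perceptron; labels are +1 (positive) or -1 (negative)."""
    w = [0.0] * len(X[0])
    b = 0.0
    for _ in range(epochs):
        for xi, yi in zip(X, y):
            score = sum(wj * xj for wj, xj in zip(w, xi)) + b
            if yi * score <= 0:  # misclassified or on the boundary: move weights toward yi
                w = [wj + lr * yi * xj for wj, xj in zip(w, xi)]
                b += lr * yi
    return w, b


def predict(w, b, x):
    """Return +1 if the linear score is positive, otherwise -1."""
    score = sum(wj * xj for wj, xj in zip(w, x)) + b
    return 1 if score > 0 else -1


def accuracy(w, b, X, y):
    """Fraction of examples whose predicted label matches the true label."""
    return sum(predict(w, b, xi) == yi for xi, yi in zip(X, y)) / len(y)


# Toy data invented for illustration: (Spanish transcript, visual features, label).
# Visual features are hypothetical [smile_score, frown_score] values per video.
vocab = ["buena", "excelente", "mala", "aburrida"]
reviews = [
    ("una película excelente y buena", [0.9, 0.1], 1),
    ("muy buena historia", [0.7, 0.2], 1),
    ("mala y aburrida", [0.1, 0.8], -1),
    ("una historia aburrida", [0.2, 0.6], -1),
]
y = [label for _, _, label in reviews]

# Text-only stage versus text + visual (fused) stage.
text_X = [bag_of_words(t, vocab) for t, _, _ in reviews]
fused_X = [fuse(bag_of_words(t, vocab), v) for t, v, _ in reviews]

text_model = train_perceptron(text_X, y)
fused_model = train_perceptron(fused_X, y)

# Classify an unseen (made-up) review using both modalities.
new_review = fuse(bag_of_words("excelente", vocab), [0.8, 0.1])
print("fused training accuracy:", accuracy(*fused_model, fused_X, y))
print("new review prediction:", predict(*fused_model, new_review))
```

Training the same classifier on `text_X` alone mirrors a text-only stage; replacing the Spanish transcripts with their English translations (and an English vocabulary) would mirror the translated stage. Concatenation is only one fusion strategy; decision-level fusion, which combines the outputs of separate per-modality classifiers, is a common alternative.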

Keywords

Multimodal analysis, natural language processing, non-verbal cues, classification algorithms, cultural-shift

Access

DOI: 10.3233/JIFS-189870

Journal: Journal of Intelligent & Fuzzy Systems, vol. 41, no. 5, pp. 5487-5496, 2021

©2023 Tulika Banerjee
